Overview: Realistic timeline expectations for AI software projects of different scopes — and the factors that most commonly cause delays.

The Timeline Question Everyone Asks

When business leaders first engage with AI development teams, timeline is almost always one of the first three questions. The honest answer is: it depends enormously on the scope of the problem, the state of your data, and the performance thresholds you need to hit. That said, there are useful benchmarks.

One important framing to carry into any AI project timeline discussion: AI development is iterative, not linear. Unlike building a website, where you can define a final deliverable upfront and measure progress against a fixed spec, AI development involves cycles of experimentation. Finding out that your initial modeling approach underperforms is not failure; it is the process working as designed. Teams that build buffer time for these discovery cycles into their plans are the ones most likely to hit their overall delivery dates.

Timeline Benchmarks by Project Type

Proof of Concept: 4 to 10 Weeks

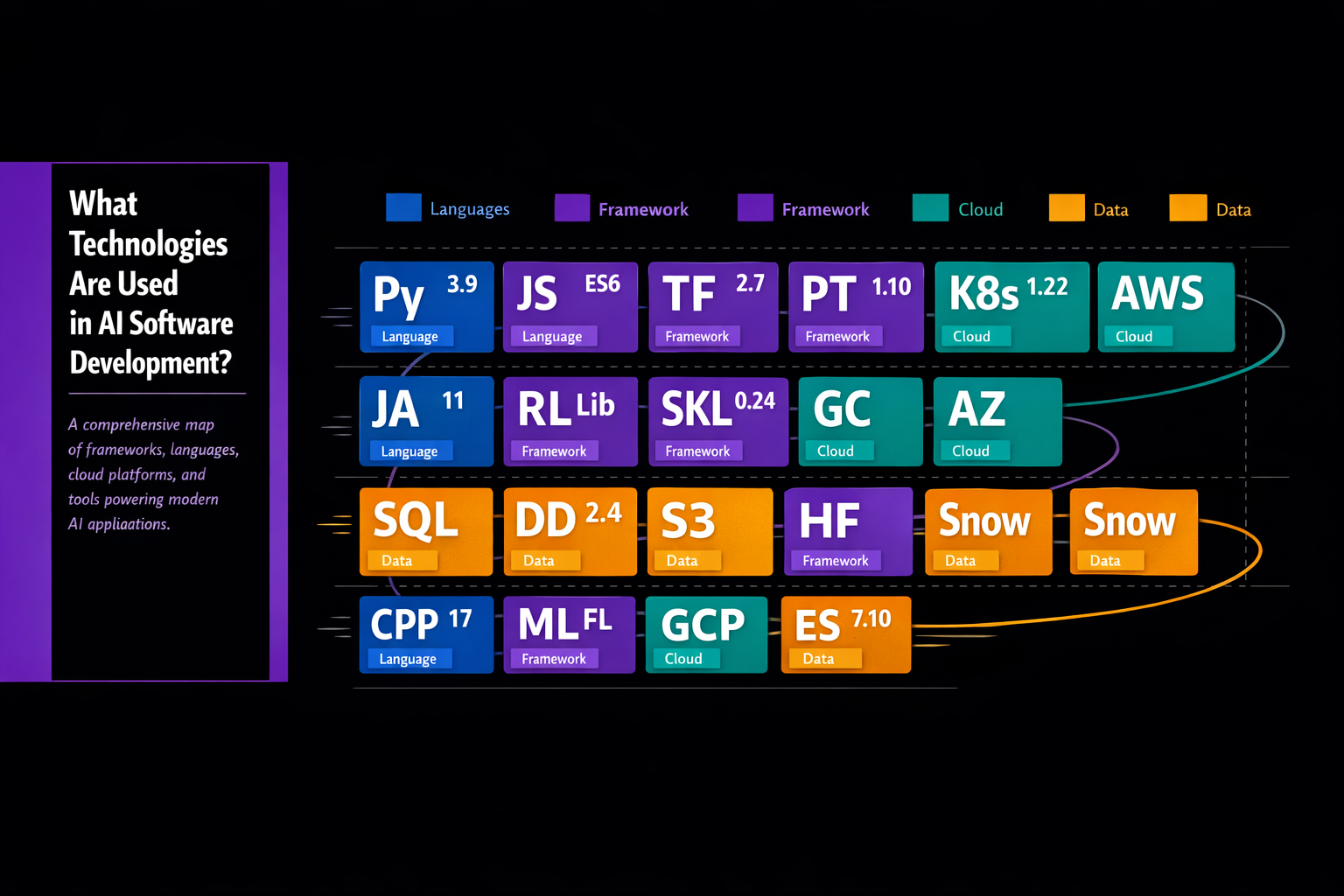

A well-scoped POC with reasonably clean data and an experienced team can move quickly. The primary activities are data exploration, feature engineering, model selection, training, and evaluation. If the data turns out to be in worse shape than anticipated — a common occurrence — add two to four weeks for cleaning and preparation.

The POC timeline can stretch significantly if the problem is ambiguous at the start. Spending the first one to two weeks on a formal problem-scoping exercise is time well invested: it reduces the risk of spending eight weeks proving that the wrong problem is solvable.

Single-Use-Case Production Application: 3 to 9 Months

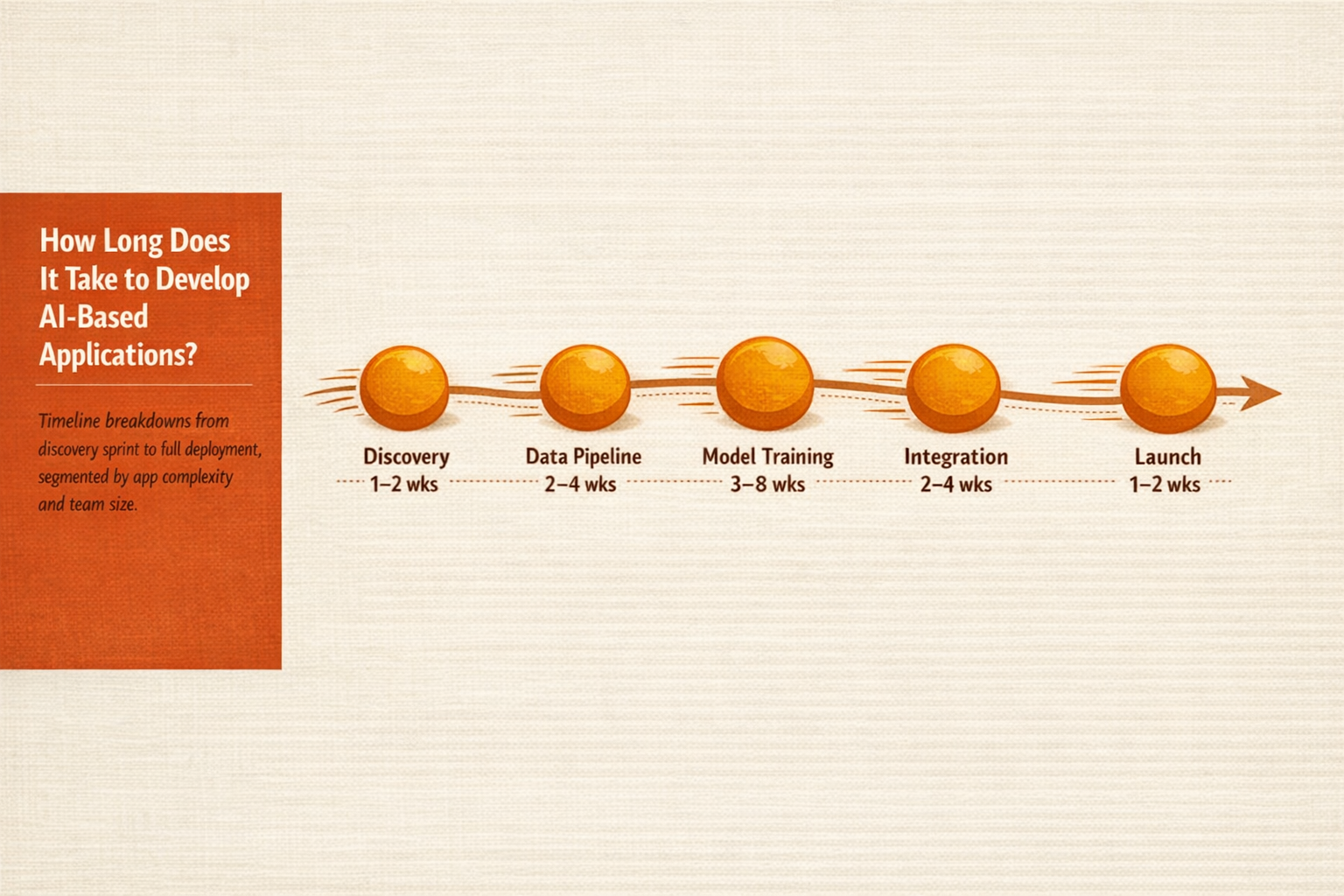

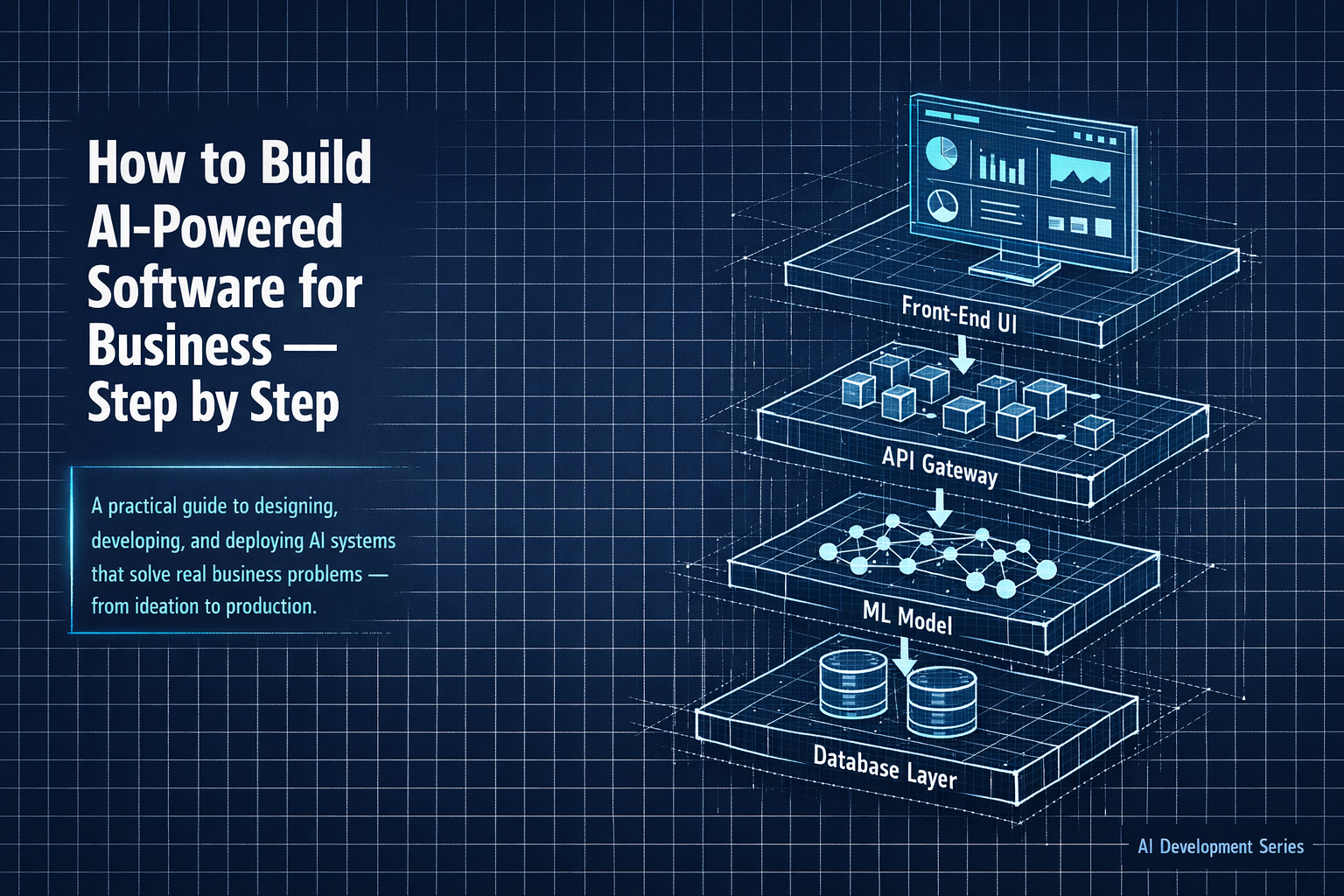

Moving from POC to production adds the following phases, each of which takes real time: data pipeline engineering (connecting the model to live production data), integration with existing systems, security review, user interface development, quality assurance testing, deployment, and initial monitoring setup.

Three months is achievable for a narrowly scoped application with clean data, a modern API-based integration target, and no complex compliance requirements. Nine months is more realistic for an application with significant data engineering work, legacy system integration, and regulatory sign-off requirements.

Enterprise Multi-Use-Case Platform: 12 to 36 Months

Large-scale AI programs are not single projects; they are portfolios of initiatives, some executed in parallel and others in sequence. A realistic 18-month roadmap might deliver three to five distinct AI capabilities, each with its own development cycle, while simultaneously building the shared data infrastructure and governance frameworks that accelerate future work.

Organizations that try to compress this timeline by cutting corners on data infrastructure or governance consistently pay the price later, in the form of brittle models, compliance issues, and the inability to scale.

What Causes AI Projects to Run Long

Based on patterns observed across many AI implementations, these are the most consistent causes of timeline delays:

- Data surprises — The actual state of the data is worse than what was reported during scoping. Duplicate records, undocumented schema changes, labels that turn out to be unreliable, and insufficient historical depth are all common discoveries.

- Scope creep — Stakeholders add requirements mid-project. In AI development, even small additions to the problem scope can require retraining the model from scratch. Rigorous change control is essential.

- Integration complexity underestimation — The systems the AI application needs to connect with turn out to be more complex, more brittle, or more poorly documented than anticipated.

- Performance targets moving — The accuracy threshold gets raised after initial model results come in, triggering additional training and evaluation cycles.

- Organizational delays — Security reviews, legal sign-offs, procurement processes, and stakeholder alignment take longer than planned. These delays are particularly common in regulated industries and large enterprises.
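Some of the data surprises listed above (duplicate records, undocumented schema changes, and insufficient historical depth) can be surfaced during scoping with simple automated checks instead of being discovered mid-project. The sketch below is a minimal, standard-library-only illustration. It assumes the data can be loaded as a list of Python dicts with hashable values and a `timestamp` field holding `datetime.date` objects; `audit_records`, its parameters, and the 365-day default are hypothetical names and values, not an established tool.

```python
from collections import Counter
from datetime import date
from typing import Any


def audit_records(
    records: list[dict[str, Any]],
    expected_fields: set[str],
    timestamp_field: str = "timestamp",
    min_history_days: int = 365,
) -> dict[str, Any]:
    """Summarize common data-quality problems in a batch of records.

    Hypothetical helper for illustration: the field names, the assumption
    that all values are hashable scalars, and the 365-day history minimum
    are placeholders to adapt, not a standard.
    """
    # Exact duplicates: fingerprint each record by its sorted (field, value)
    # pairs. Keys are unique within a dict, so values are never compared.
    fingerprints = Counter(tuple(sorted(r.items())) for r in records)
    duplicate_rows = sum(n - 1 for n in fingerprints.values() if n > 1)

    # Schema drift: expected fields that are absent, and fields nobody documented.
    missing_fields = Counter()
    unexpected_fields = Counter()
    for r in records:
        keys = set(r)
        missing_fields.update(expected_fields - keys)
        unexpected_fields.update(keys - expected_fields)

    # Empty values: expected fields that are present but hold None or "".
    empty_values = Counter(
        f for r in records for f in expected_fields
        if f in r and r[f] in (None, "")
    )

    # Historical depth: days between the earliest and latest timestamps
    # (assumes all timestamps are the same type, e.g. all datetime.date).
    dates = [r[timestamp_field] for r in records if isinstance(r.get(timestamp_field), date)]
    history_days = (max(dates) - min(dates)).days if dates else 0

    return {
        "row_count": len(records),
        "duplicate_rows": duplicate_rows,
        "missing_fields": dict(missing_fields),
        "unexpected_fields": dict(unexpected_fields),
        "empty_values": dict(empty_values),
        "history_days": history_days,
        "enough_history": history_days >= min_history_days,
    }


if __name__ == "__main__":
    sample = [
        {"customer_id": 1, "amount": 20.0, "timestamp": date(2023, 1, 5)},
        {"customer_id": 1, "amount": 20.0, "timestamp": date(2023, 1, 5)},  # exact duplicate
        {"customer_id": 2, "amount": None, "timestamp": date(2023, 6, 1)},  # empty value
        {"customer_id": 3, "amt": 15.0, "timestamp": date(2023, 9, 30)},  # undocumented rename
    ]
    print(audit_records(sample, expected_fields={"customer_id", "amount", "timestamp"}))
```

These checks only catch exact duplicates and structural problems; near-duplicate records and unreliable labels usually need manual or domain-specific review.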

How to Keep Your Project on Track

- Invest in a thorough data audit during the scoping phase — it is the single most reliable way to de-risk the timeline

- Agree on performance thresholds before development begins, and treat them as contractual commitments from both sides

- Build explicit iteration buffers into the project plan — assume the first model will not meet targets and budget time to improve it

- Identify all integration points early and get technical documentation from system owners before the engineering team needs it

- Assign a dedicated internal project owner with authority to make decisions and unlock resources — projects with diffuse ownership consistently run longer
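Treating performance thresholds as commitments works best when they are written down in a form both sides can check mechanically. A minimal sketch, assuming model metrics are reported as a dict mapping metric names to floats; `Threshold`, `check_acceptance`, the metric names, and the threshold values are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Threshold:
    """One agreed acceptance criterion for a single metric (hypothetical structure)."""

    metric: str
    minimum: Optional[float] = None  # value must be >= minimum, when set
    maximum: Optional[float] = None  # value must be <= maximum, when set


def check_acceptance(metrics: dict[str, float], thresholds: Iterable[Threshold]) -> list[str]:
    """Compare reported metrics with the agreed thresholds.

    Returns human-readable failures; an empty list means every criterion passed.
    """
    failures = []
    for t in thresholds:
        value = metrics.get(t.metric)
        if value is None:
            # A metric that was agreed on but never reported counts as a failure.
            failures.append(f"{t.metric}: not reported")
            continue
        if t.minimum is not None and value < t.minimum:
            failures.append(f"{t.metric}: {value:.3f} is below the agreed minimum {t.minimum:.3f}")
        if t.maximum is not None and value > t.maximum:
            failures.append(f"{t.metric}: {value:.3f} is above the agreed maximum {t.maximum:.3f}")
    return failures


# Illustrative thresholds, fixed before development starts (example values only).
AGREED_THRESHOLDS = (
    Threshold("precision", minimum=0.90),
    Threshold("recall", minimum=0.80),
    Threshold("p95_latency_ms", maximum=200.0),
)

if __name__ == "__main__":
    results = {"precision": 0.93, "recall": 0.76, "p95_latency_ms": 140.0}
    for failure in check_acceptance(results, AGREED_THRESHOLDS):
        print(failure)
```

Keeping the thresholds in an immutable, version-controlled definition means that raising a target after results come in (the moving-targets problem described earlier) shows up as an explicit, reviewable change rather than an informal request.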

Realistic Expectation: A production-ready AI feature that genuinely adds value typically takes four to six months of focused work for an experienced team, even for relatively straightforward use cases. If a vendor quotes fewer than eight weeks for full production delivery of a novel AI application, ask detailed questions about their assumptions.